Run detail

Subject 2-M16 — Run 2026-03-19

Date: 2026-03-19

Run note

This March 19 bundle is the current winning 2-M16 deployment candidate. It combines cleaned training data, active-finger decoding, explicit finger-applicability gating, and zero invalid action-finger pairs in committed or sent outputs.

Highlights

- The public 2-M16 bundle now tracks the cleaned March 19 combined corpus rather than the older March 18 combined session.

- The active-finger head is now paired with a dedicated finger-applicability head, so REST-side gating is modeled directly instead of being inferred from active-finger logits.

- Public holdout and replay bundles now publish applicability false-positive and false-negative rates together with the deployment pair invariant.

Changes in this bundle

- The rates of committed and sent OPEN or CLOSE actions paired with a NONE finger remain zero across the published holdout and replay bundles.

- The rates of committed and sent REST actions paired with an active finger also remain zero, because a REST action forces the finger to NONE while the applicability head only gates actuation.

- The tuned threshold_applicability = 0.4 setting is now reflected in deployment config, replay artifacts, report HTML, and the public website bundle.
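
The pair rules in the list above can be sketched as a small check. This is a minimal illustration rather than the project's code: the names `commit_pair` and `invalid_pair_rates`, the finger label strings, and the exact way the applicability probability gates actuation are assumptions. Only the REST/OPEN/CLOSE actions, the NONE finger, and the 0.4 threshold come from this page.

```python
from typing import NamedTuple


class Committed(NamedTuple):
    action: str        # "REST", "OPEN", or "CLOSE"
    finger: str        # "NONE" or an active-finger label (labels are hypothetical)
    may_actuate: bool  # whether the applicability gate would allow a send


def commit_pair(action, active_finger, p_applicable, threshold_applicability=0.4):
    """Turn raw head outputs into a committed action-finger pair (assumed rule)."""
    if action == "REST":
        # REST is forced to NONE, so REST + active-finger cannot be committed.
        return Committed("REST", "NONE", False)
    # Applicability only gates actuation; it never rewrites the finger to NONE.
    return Committed(action, active_finger, p_applicable >= threshold_applicability)


def invalid_pair_rates(pairs):
    """Return (non-REST + NONE rate, REST + active-finger rate)."""
    if not pairs:
        return 0.0, 0.0
    n = len(pairs)
    non_rest_none = sum(1 for p in pairs if p.action != "REST" and p.finger == "NONE")
    rest_active = sum(1 for p in pairs if p.action == "REST" and p.finger != "NONE")
    return non_rest_none / n, rest_active / n


# Toy raw outputs: (action, active-finger argmax, applicability probability).
raw = [("REST", "index", 0.7), ("OPEN", "thumb", 0.9), ("CLOSE", "ring", 0.1)]
committed = [commit_pair(*r) for r in raw]
print(invalid_pair_rates(committed))
```

Under these assumed rules both invalid-pair rates are zero by construction, which is the property the deployment pair invariant reports.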

Deployment note

The March 19 website refresh replaces the March 18 public snapshot with the current winning-model bundle. The featured figure set now emphasizes current confusion, calibration, and replay diagnostics.

Frozen live defaults

Postprocess enabled, ema (5), finger_mode=raw

threshold_action=0.05, threshold_finger=0.2, threshold_applicability=0.4, actuation_min_prob=0.2

actuation_stability=3, cooldown_ms=250, speed_modulation=on
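
As a rough sketch of how these defaults could be held in code, the block below collects them in a dictionary and applies exponential moving-average smoothing to per-window class probabilities. The key names `smoothing` and `ema_span`, the function `ema_smooth`, and the reading of "ema (5)" as a span of 5 windows (alpha = 2 / (span + 1)) are assumptions; the page does not define them.

```python
# Frozen live defaults as listed on this page. "smoothing" and "ema_span"
# are illustrative key names; the remaining keys mirror the page.
FROZEN_DEFAULTS = {
    "postprocess": True,
    "smoothing": "ema",
    "ema_span": 5,
    "finger_mode": "raw",
    "threshold_action": 0.05,
    "threshold_finger": 0.2,
    "threshold_applicability": 0.4,
    "actuation_min_prob": 0.2,
    "actuation_stability": 3,
    "cooldown_ms": 250,
    "speed_modulation": True,
}


def ema_smooth(prob_rows, span=5):
    """Smooth a sequence of probability vectors with an EMA.

    Assumes alpha = 2 / (span + 1); the first row initializes the state.
    """
    alpha = 2.0 / (span + 1)
    smoothed, state = [], None
    for row in prob_rows:
        if state is None:
            state = list(row)
        else:
            state = [alpha * x + (1.0 - alpha) * s for x, s in zip(row, state)]
        smoothed.append(list(state))
    return smoothed


# Toy example: a sudden flip between two classes is damped by the EMA.
out = ema_smooth([[1.0, 0.0], [0.0, 1.0]], span=FROZEN_DEFAULTS["ema_span"])
print(out[1])
```

Because each smoothed row is a convex combination of probability vectors, it still sums to one, so the thresholds can be applied to the smoothed values directly.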

Test action accuracy

89.79%

2,301 held-out windows

Finger accuracy on non-REST

87.01%

1,994 non-REST test windows

Primary holdout joint accuracy

84.66%

REST TPR 98.37% · applicability FN 2.26%

Pseudo-live committed joint

86.64%

Would-send precision 93.32% · false REST actuation 0.12%

Why this run won

The public March 19 bundle is the tip of a much larger tuning and validation iceberg.

The featured 2-M16 run was not chosen on a single accuracy number. It emerged from repeated retraining, postprocess ablations, holdout audits, chronological replay, and pseudo-live replay until the deployment pair invariants stayed clean while the broader replay ladder remained competitive.

2,595 configs

Postprocess ablation

The March 16, 2026 website update documents a 2,595-config ablation over thresholds, smoothing, hysteresis, adjacency, and finger-mode settings.

96 retained sweep runs

Archived Step 2 + Step 3 cycle

The preserved `logs/sweep/` CSVs retain 96 completed training-plus-evaluation runs from the broader 2-M16 tuning cycle.

100+ model variants

Documented in the older Feb 26 update

The February 26, 2026 2-M16 tuning update states that 100+ model variants were trained across full-dataset, non-REST event-gated, and REST event-gated regimes.

30+ hours

Continuous sweep time

That same February 26, 2026 update describes a 30+ hour sweep and highlights a 90-run block that spanned about 33.3 hours from February 25, 2026 07:49 to February 26, 2026 17:07.

How this run was chosen

- The March 19 checkpoint replaced the March 18 deployment candidate after the cleaned training corpus, explicit finger-applicability head, and refreshed replay bundle all aligned better than the previous public snapshot.

- Selection favored the combination of strong holdout metrics, stronger replay behavior on the cleaned deployment corpus, and zero committed or sent invalid action-finger pairs across the published holdout and replay bundles.

- The model was chosen because it behaved coherently across saved split metrics, chronological replay, and pseudo-live replay, not because it won on one leaderboard number.

- The harder March 17 realism replay is still conservative on applicability recall, but it remains part of the public selection story because it shows where the deployment stack is still weak.

How the tuning campaign evolved

- The February 26, 2026 update documents the earlier large-scale weight and hyperparameter campaign: 100+ trained variants, a 30+ hour sweep, and a largest logged 90-run block.

- The March 16, 2026 update documents the later deployment-facing postprocess ablation that froze the live default family after 2,595 evaluated configurations.

- The March 18, 2026 update widened the selection criteria from holdout accuracy alone to include full-session replay, pseudo-live behavior, and the end-to-end Step 7 control path.

- The March 19, 2026 update finalized the current winning bundle by moving to the cleaned corpus and publishing applicability diagnostics directly alongside the deployment pair invariant.

Training Recipe & Frozen Runtime

This is the deeper layer behind the public bundle: the training recipe, split policy, auxiliary data support, and frozen deployment defaults that carried the winning checkpoint into replay and pseudo-live evaluation.

Training stack

Architecture

CNNLSTMFingerActionNet

The March 19 winning run combines an action head, an active-finger head, and a dedicated finger-applicability head.

Optimization

60 epochs · batch 64 · lr 0.001 · seed 43

These values come from the winning run's training config and match the published March 19 metrics bundle.

Split policy

group_trial · test_size 0.2 · calibration_size 0.1

The holdout bundle stays tied to a fixed split while calibration is separated from the main train/test partition.
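
A group-wise split of this kind can be sketched as follows. This is an illustration under stated assumptions, not the run's splitter: the function name `group_trial_split`, the choice to take both fractions over trials (not windows), and the reuse of seed 43 for the split are all hypothetical.

```python
import random
from collections import defaultdict


def group_trial_split(trial_ids, test_size=0.2, calibration_size=0.1, seed=43):
    """Assign whole trials to train, calibration, or test.

    trial_ids holds one trial identifier per window. Keeping each trial in a
    single partition prevents overlapping windows from the same trial from
    appearing on both sides of the split.
    """
    trials = sorted(set(trial_ids))
    random.Random(seed).shuffle(trials)
    n_test = max(1, round(len(trials) * test_size))
    n_cal = max(1, round(len(trials) * calibration_size))
    test = set(trials[:n_test])
    cal = set(trials[n_test:n_test + n_cal])

    parts = defaultdict(list)
    for idx, trial in enumerate(trial_ids):
        if trial in test:
            parts["test"].append(idx)
        elif trial in cal:
            parts["calibration"].append(idx)
        else:
            parts["train"].append(idx)
    return dict(parts)


# Toy data: 20 trials with 10 windows each.
trial_ids = [i // 10 for i in range(200)]
parts = group_trial_split(trial_ids)
print({name: len(idx) for name, idx in parts.items()})
```

With 20 equally sized toy trials this yields 140 train, 20 calibration, and 40 test windows; with real trials of unequal length the window fractions would only approximate 0.2 and 0.1.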

Input + preprocessing

64 x 4 windows · center_detrend

Per-window centering and detrending are frozen into the winning run's preprocessing and normalizer config.
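
One plausible reading of `center_detrend` is to remove the mean and the least-squares linear trend from each channel of every window. The sketch below implements that reading in plain Python; the function name and the choice of a linear (rather than higher-order) trend are assumptions, since the page does not define the operation.

```python
def center_detrend(window):
    """Remove mean and linear trend per channel.

    window is a list of samples, each a list of channel values
    (for example 64 samples x 4 channels). Requires at least 2 samples.
    """
    n = len(window)
    n_ch = len(window[0])
    t_mean = (n - 1) / 2.0
    t_var = sum((t - t_mean) ** 2 for t in range(n))
    out = [[0.0] * n_ch for _ in range(n)]
    for c in range(n_ch):
        col = [row[c] for row in window]
        mean = sum(col) / n
        # Least-squares slope of the channel against the sample index.
        slope = sum((t - t_mean) * (x - mean) for t, x in enumerate(col)) / t_var
        for t, x in enumerate(col):
            out[t][c] = x - mean - slope * (t - t_mean)
    return out


# Toy 64 x 4 window made only of offsets and ramps: the output should be ~0.
window = [[3.0 + 0.5 * t, 7.0, -2.0 * t, 0.0] for t in range(64)]
residual = center_detrend(window)
print(max(abs(v) for row in residual for v in row))
```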

Sampler

core_event_equalized

Training equalizes the core REST-event mass while still keeping the auxiliary quiet-rest corpus train-only.

REST support

1,059 auxiliary quiet-rest windows

The auxiliary quiet-rest session is used for train-only support while the core split contributes 11,388 windows and the public test split contributes 2,301 windows.

Replay and runtime stack

Frozen live defaults

EMA smoothing (5) · action 0.05 · finger 0.20 · applicability 0.40

The March 19 bundle freezes the same deployment-facing thresholds reflected in the replay artifacts, report HTML, and website.

Actuation gates

min_prob 0.2 · stability 3 · cooldown 250 ms

These are the saved Step 7 decision defaults for the current deployment candidate.
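
The three gates can be illustrated with a small state machine. This is a sketch under assumed semantics, not the Step 7 implementation: here `stability` means the same committed pair must appear in that many consecutive windows, `min_prob` is a floor on the pair's probability, and `cooldown_ms` is the minimum time between sends. The class name `ActuationGate` is hypothetical.

```python
class ActuationGate:
    """Decide whether a committed window would send a command (assumed rules)."""

    def __init__(self, min_prob=0.2, stability=3, cooldown_ms=250):
        self.min_prob = min_prob
        self.stability = stability
        self.cooldown_ms = cooldown_ms
        self._last_pair = None
        self._streak = 0
        self._last_send_ms = None

    def step(self, t_ms, action, finger, prob):
        pair = (action, finger)
        # Count how many consecutive windows have committed the same pair.
        self._streak = self._streak + 1 if pair == self._last_pair else 1
        self._last_pair = pair

        if action == "REST" or prob < self.min_prob:
            return False
        if self._streak < self.stability:
            return False
        if (self._last_send_ms is not None
                and t_ms - self._last_send_ms < self.cooldown_ms):
            return False
        self._last_send_ms = t_ms
        return True


# Toy stream: the same OPEN + index pair every 50 ms (the replay hop).
gate = ActuationGate()
sends = [gate.step(t, "OPEN", "index", 0.9) for t in range(0, 400, 50)]
print(sends)
```

In this toy stream the first send happens on the third consecutive window and the next one only after the 250 ms cooldown has elapsed, which shows how these gates trade would-send recall for precision.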

Replay cadence

0.25 s windows · 0.05 s hop · 10 MC passes

The pseudo-live replay runs the same checkpoint through the saved inference and actuation path at a replay cadence close to live use.
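
The cadence can be made concrete with a short sketch. It assumes a 256 Hz sampling rate, inferred from 64-sample windows lasting 0.25 s, and rounds the 0.05 s hop (12.8 samples) to the nearest sample index. The helper names `window_starts` and `mc_average` are hypothetical, and "MC passes" is read here as repeated stochastic forward passes whose outputs are averaged.

```python
def window_starts(n_samples, fs=256, win_s=0.25, hop_s=0.05):
    """Start indices of all full windows in a recording of n_samples."""
    win = round(win_s * fs)  # 64 samples at 256 Hz
    starts = []
    k = 0
    while True:
        start = round(k * hop_s * fs)  # hop is 12.8 samples, so round per window
        if start + win > n_samples:
            break
        starts.append(start)
        k += 1
    return starts


def mc_average(predict, window, passes=10):
    """Average the probability vectors from repeated calls to predict."""
    rows = [predict(window) for _ in range(passes)]
    return [sum(col) / passes for col in zip(*rows)]


# One second of data at the assumed 256 Hz rate.
starts = window_starts(256)
print(len(starts), starts[:4], starts[-1])

# Stand-in predictor for illustration only; a real one would be stochastic.
mean_probs = mc_average(lambda w: [0.2, 0.8], window=None)
print(mean_probs)
```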

Replay latency

127.21 ms mean · 127.29 ms p95

The current cleaned-corpus pseudo-live replay logs stable prediction latency across 12,447 windows.

Would-send onset

0.083 s median · 0.317 s p95

These onset figures come from the current cleaned-corpus pseudo-live replay and are exposed in the public benchmark ladder.

Replay footprint

12,447 windows over 3,046.15 s

The cleaned deployment replay is long enough to expose transition behavior, actuation suppression reasons, and latency stability rather than only short held-out windows.

Key Metrics

The public headline metrics use the published holdout bundle. Extended evaluation cards below add replay and pseudo-live context so the reader can see how the model behaves beyond a single split.

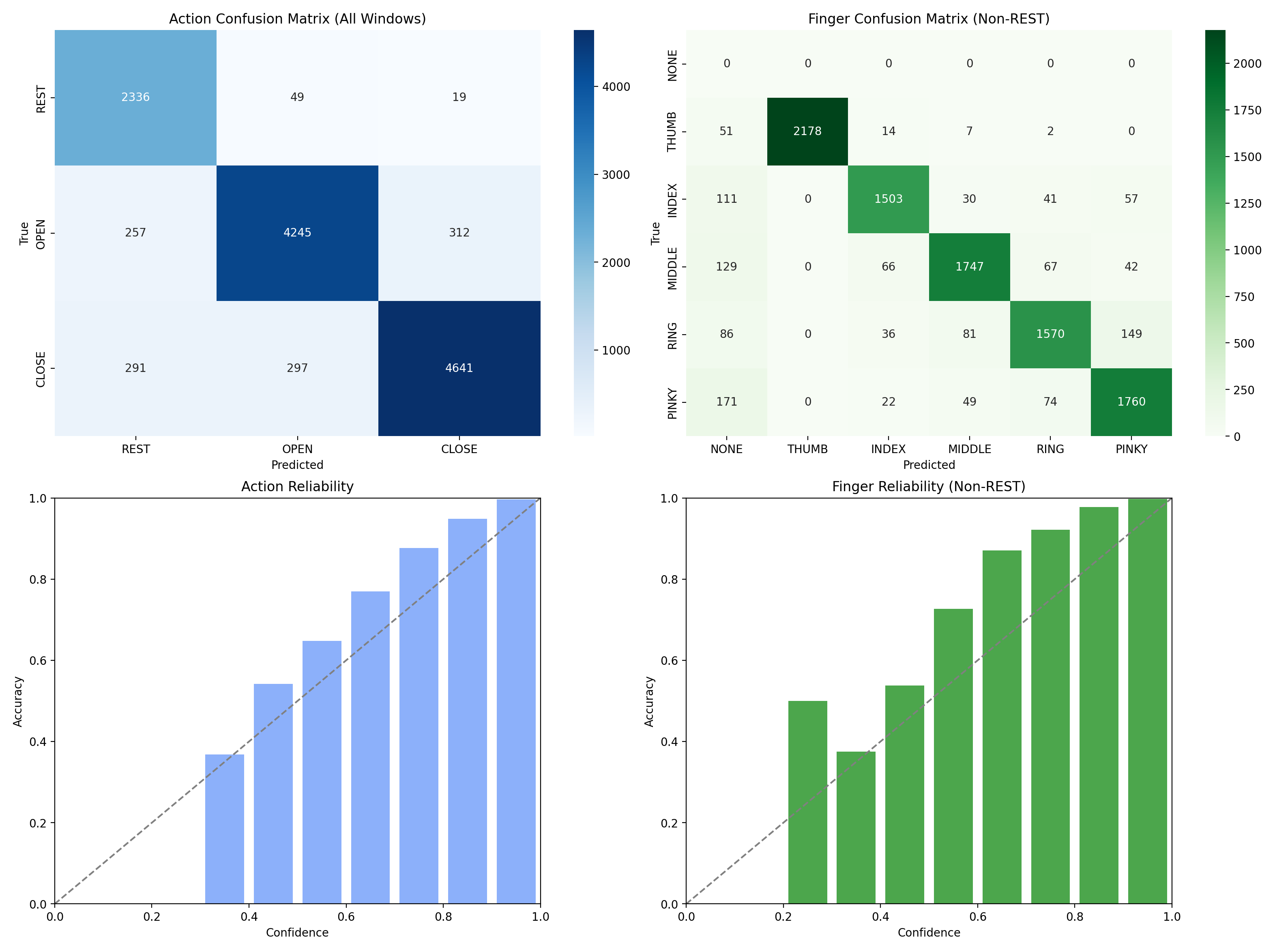

| Split | Metric | Value |

|---|---|---|

| Train | Action accuracy | 86.39% |

| Train | Finger accuracy | 86.80% |

| Train | Avg loss | 0.7714 |

| Train | Config | epochs=60, batch=64, lr=0.001, seed=43 |

| Test | Action accuracy | 89.79% |

| Test | Finger accuracy on non-REST windows | 87.01% |

| Test | Joint accuracy | 84.66% |

| Test | Joint accuracy on non-REST | 82.55% |

| Test | Finger accuracy overall | 87.61% |

| Test | REST TPR / precision / F1 | 98.37% / 80.11% / 0.883 |

| Test | REST FPR | 3.76% |

| Test | Applicability FP / FN | 18.57% / 2.26% |

| Test | Action-applicability disagreement | 3.56% |

| Test | Raw valid / invalid pair rate | 83.62% / 16.38% |

| Test | Raw non-REST NONE / raw REST active-finger | 0.00% / 16.38% |

| Test | Committed non-REST NONE / committed REST active-finger | 0.00% / 0.00% |

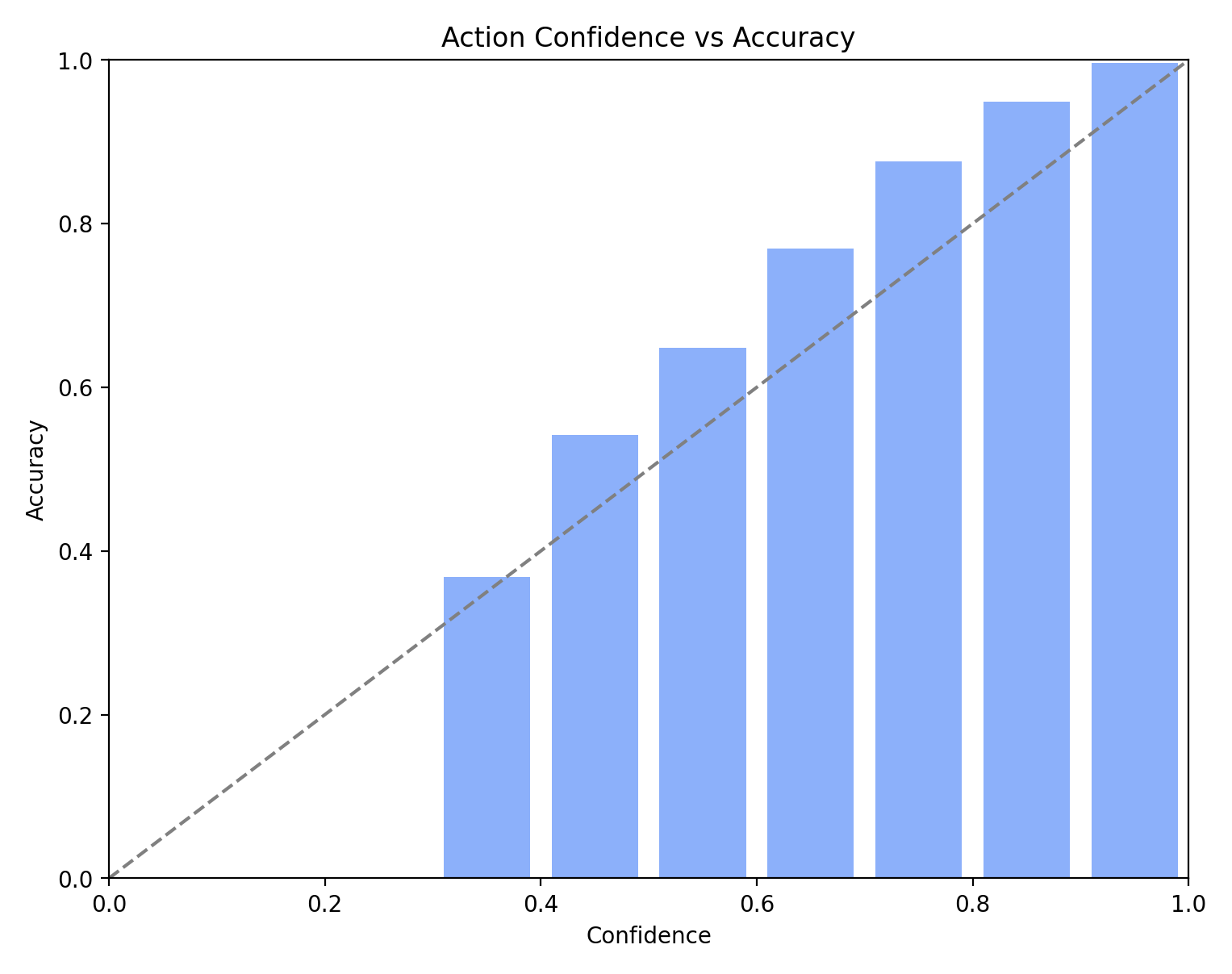

| Test | Action ECE / finger ECE on non-REST | 2.32% / 2.73% |

| Test | Deployment pair invariant | passed |

| Test | Test windows | 2,301 |

| Test | Non-REST test windows | 1,994 |
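
The REST and joint rows of the test table can be cross-checked with a few lines of arithmetic. The window counts below are back-derived by rounding the published rates and are not published figures; the check also assumes that a REST window counts as jointly correct whenever its action is correct, since REST is forced to NONE.

```python
test_windows = 2301
non_rest = 1994
rest = test_windows - non_rest           # 307 REST windows

# Back-derived counts (assumption: published rates are rounded count ratios).
tp = round(0.9837 * rest)                # REST windows predicted REST
fp = round(0.0376 * non_rest)            # non-REST windows predicted REST

precision = tp / (tp + fp)
recall = tp / rest
f1 = 2 * precision * recall / (precision + recall)

joint_non_rest_correct = round(0.8255 * non_rest)
joint = (tp + joint_non_rest_correct) / test_windows

predicted_rest_rate = (tp + fp) / test_windows

print(f"REST precision {precision:.2%}, F1 {f1:.3f}")
print(f"Joint accuracy {joint:.2%}")
print(f"Predicted-REST share {predicted_rest_rate:.2%}")
```

Under these assumptions the reconstructed values reproduce the published 80.11% precision, 0.883 F1, and 84.66% joint accuracy. The predicted-REST share also comes out at 16.38%, which matches the raw REST active-finger rate and would be consistent with every raw REST prediction still carrying an active-finger label before the finger is forced to NONE.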

Artifacts

model=finger_action_model.pt, scaler=scaler.npz, preds=test_predictions.npz

temperature scaling=temperature_scaling.json

Source identifiers: subject=2-M16, session=combined_20260319_081200_pruned_rest_events_0_1_2, run=20260319_075520

Created UTC: 2026-03-19T08:27:08+00:00

How to read this bundle

The test row is the saved split summary. Replay and pseudo-live cards below use the same checkpoint under different evaluation conditions.

Action train-test gap: 3.39 percentage points, with test accuracy higher than training accuracy.

Extended Evaluation

This section groups repeated splits, quiet-rest replay, and chronological replay for the same run.

Auxiliary quiet-REST benchmark

Target: 2-M16_20260315_145838_01

Windows: 1,059

Action accuracy: 97.26%

REST TPR: 97.26%

REST precision: 100.00%

REST F1: 0.986

Applicability FP on true REST: 4.53%

Deployment pair invariant: passed

Dedicated quiet-rest replay used to measure REST-side applicability false positives on true REST windows.

Core full-session replay

Target: 2-M16_20260216_150056_01 + 2-M16_20260317_190134

Windows: 11,388

Action accuracy: 88.48%

Joint accuracy: 84.30%

Joint accuracy on non-REST: 82.73%

Finger accuracy on non-REST: 85.71%

REST TPR: 95.99%

REST precision: 69.15%

Applicability FP on true REST: 18.07%

Applicability FN on true non-REST: 3.68%

Committed non-REST + NONE rate: 0.00%

Committed REST + active-finger rate: 0.00%

Deployment pair invariant: passed

Chronological replay across the two core movement sessions with zero committed pair leakage and explicit applicability diagnostics.

Pseudo-Live Replay

Pseudo-live replay runs the saved EEG windows through the Step 7 decision path and records what the hand would have done, without contacting hardware. Of the public benchmarks on the site, this is the closest to live control behavior.

Pseudo-live replay on the cleaned deployment corpus

Target: combined_20260319_081200_pruned_rest_events_0_1_2

Training source: Winning March 19 deployment checkpoint

Windows: 12,447

Committed action accuracy: 91.75%

Committed joint accuracy: 86.64%

Committed finger accuracy on non-REST: 85.78%

Applicability FP on true REST: 12.10%

Applicability FN on true non-REST: 3.68%

Would-send precision on non-REST: 93.32%

Would-send recall on non-REST: 10.57%

False REST actuation rate: 0.12%

Non-REST NONE count: 0

Committed non-REST + NONE rate: 0.00%

Committed REST + active-finger rate: 0.00%

Sent non-REST + NONE rate: 0.00%

Sent REST + active-finger rate: 0.00%

Deployment pair invariant: passed

First-send latency median / p95: 0.083 s / 0.317 s

The threshold_applicability setting is tuned to 0.4 for the current deployment bundle.

Pseudo-live replay on the legacy combined corpus

Target: combined_20260317_211414

Training source: Winning March 19 deployment checkpoint

Windows: 12,969

Committed action accuracy: 87.95%

Committed joint accuracy: 82.98%

Committed finger accuracy on non-REST: 85.90%

Applicability FP on true REST: 27.79%

Applicability FN on true non-REST: 3.68%

Would-send precision on non-REST: 89.62%

Would-send recall on non-REST: 10.57%

False REST actuation rate: 1.71%

Non-REST NONE count: 0

Committed non-REST + NONE rate: 0.00%

Committed REST + active-finger rate: 0.00%

Sent non-REST + NONE rate: 0.00%

Sent REST + active-finger rate: 0.00%

Deployment pair invariant: passed

First-send latency median / p95: 0.083 s / 0.317 s

Regression replay against the pre-pruned March 17 combined corpus.

Pseudo-live replay on the March 17 realism session

Target: 2-M16_20260317_190134

Training source: Winning March 19 deployment checkpoint

Windows: 1,644

Committed action accuracy: 72.87%

Committed joint accuracy: 71.96%

Committed finger accuracy on non-REST: 9.72%

Applicability FP on true REST: 17.89%

Applicability FN on true non-REST: 52.98%

Would-send precision on non-REST: 62.50%

Would-send recall on non-REST: 0.99%

False REST actuation rate: 0.09%

Non-REST NONE count: 0

Committed non-REST + NONE rate: 0.00%

Committed REST + active-finger rate: 0.00%

Sent non-REST + NONE rate: 0.00%

Sent REST + active-finger rate: 0.00%

Deployment pair invariant: passed

First-send latency median / p95: 0.381 s / 0.566 s

Hard realism check remains conservative: pair invariants hold, but applicability recall is still weak on this session.

Across published runs

Compare to other runs

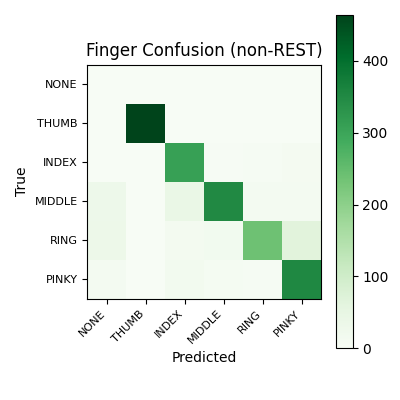

Finger accuracy is reported on non-REST windows only.

| Run | Date | Action accuracy | Finger accuracy on non-REST windows | Test windows |
|---|---|---|---|---|
| 2-m16 | 2026-03-19 | 89.79% | 87.01% | 2,301 |
| 1-m16-500 | 2026-03-05 | 83.94% | 80.61% | 2,652 |

Plain-language highlights

- Test action accuracy: 89.79%.

- Test finger accuracy on non-REST windows: 87.01%.

- Test windows: 2,301.

What this means

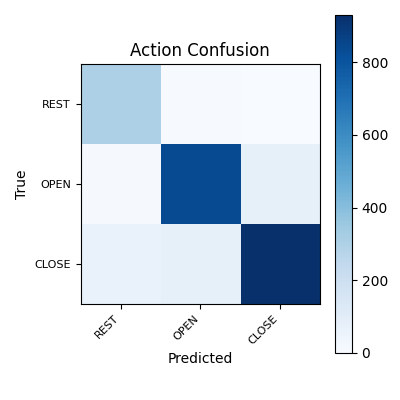

- Action accuracy measures how often held-out EEG windows were assigned the correct REST, OPEN, or CLOSE label.

- Finger accuracy on non-REST windows isolates finger classification after removing EEG windows labeled REST.

- These are EEG-window-level metrics and should not be interpreted as direct trial-level or online-control performance.

- Confusion matrices and confidence plots provide error structure that is not visible from accuracy alone.
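
The two headline definitions above can be written out directly. This is a minimal sketch on toy labels; the function names and the finger label strings are illustrative and not taken from the project.

```python
def action_accuracy(true_actions, pred_actions):
    """Fraction of windows whose predicted action matches the true action."""
    hits = sum(1 for t, p in zip(true_actions, pred_actions) if t == p)
    return hits / len(true_actions)


def finger_accuracy_non_rest(true_actions, true_fingers, pred_fingers):
    """Finger accuracy restricted to windows whose true action is not REST."""
    keep = [i for i, a in enumerate(true_actions) if a != "REST"]
    hits = sum(1 for i in keep if true_fingers[i] == pred_fingers[i])
    return hits / len(keep)


# Toy labels for five windows (not real data).
true_actions = ["REST", "OPEN", "CLOSE", "OPEN", "REST"]
pred_actions = ["REST", "OPEN", "OPEN", "OPEN", "CLOSE"]
true_fingers = ["NONE", "index", "thumb", "ring", "NONE"]
pred_fingers = ["NONE", "index", "index", "ring", "thumb"]

print(action_accuracy(true_actions, pred_actions))
print(finger_accuracy_non_rest(true_actions, true_fingers, pred_fingers))
```

The second metric ignores the two REST windows entirely, so an error on a REST window (the last one here) lowers action accuracy but leaves finger accuracy on non-REST windows unchanged.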

Trust & Caveats

- The public metrics bundle does not include full per-class counts, so class imbalance is not fully characterized on-page.

- The pipeline uses overlapping windows; leakage control depends on split settings and metadata quality.

- This public bundle does not expose run-specific split mode or purge settings, so leakage risk cannot be fully ruled out for this run.

Topomaps & Signal Evidence

These alpha-band topomaps are here to show what changed physiologically, not to replace the classifier metrics. They help explain why the current deployment strategy leans on lateral Muse 2 channels and all-session training rather than a narrow single-session fit.

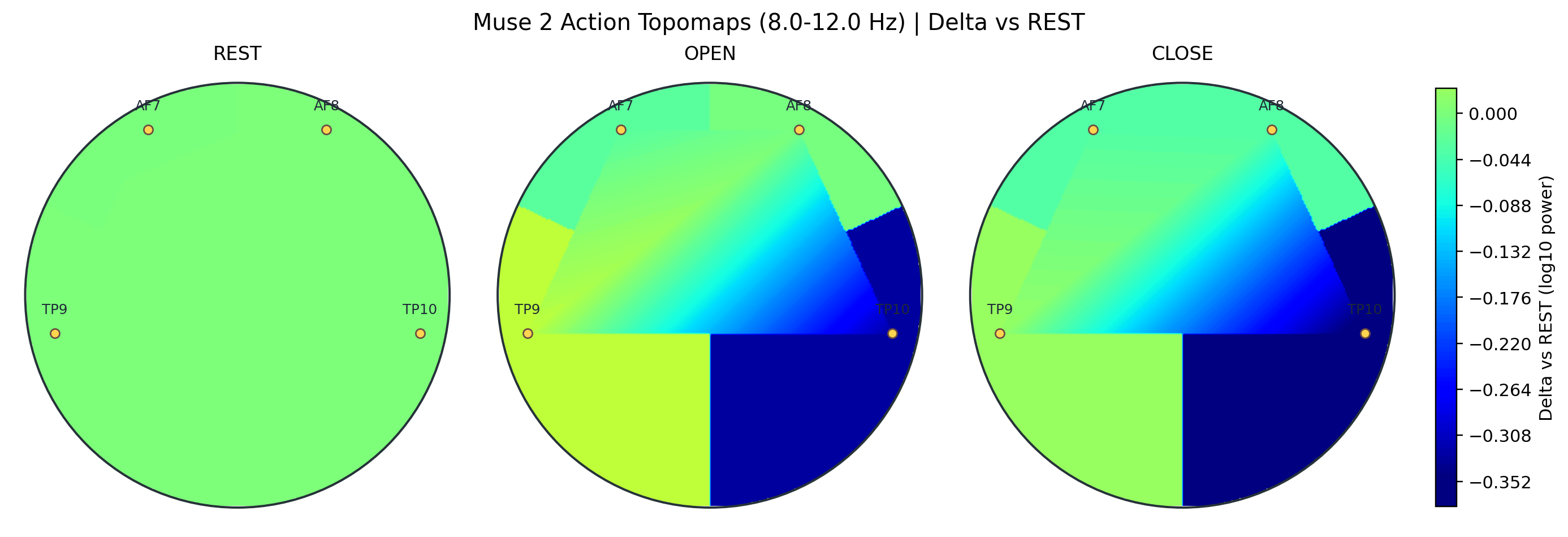

Action alpha rest-delta topomap

Rest-relative alpha maps for REST, OPEN, and CLOSE in the March 19 winning session. OPEN and CLOSE both show the dominant TP10 decrease and smaller TP9 increase that characterize the current 2-M16 action story.

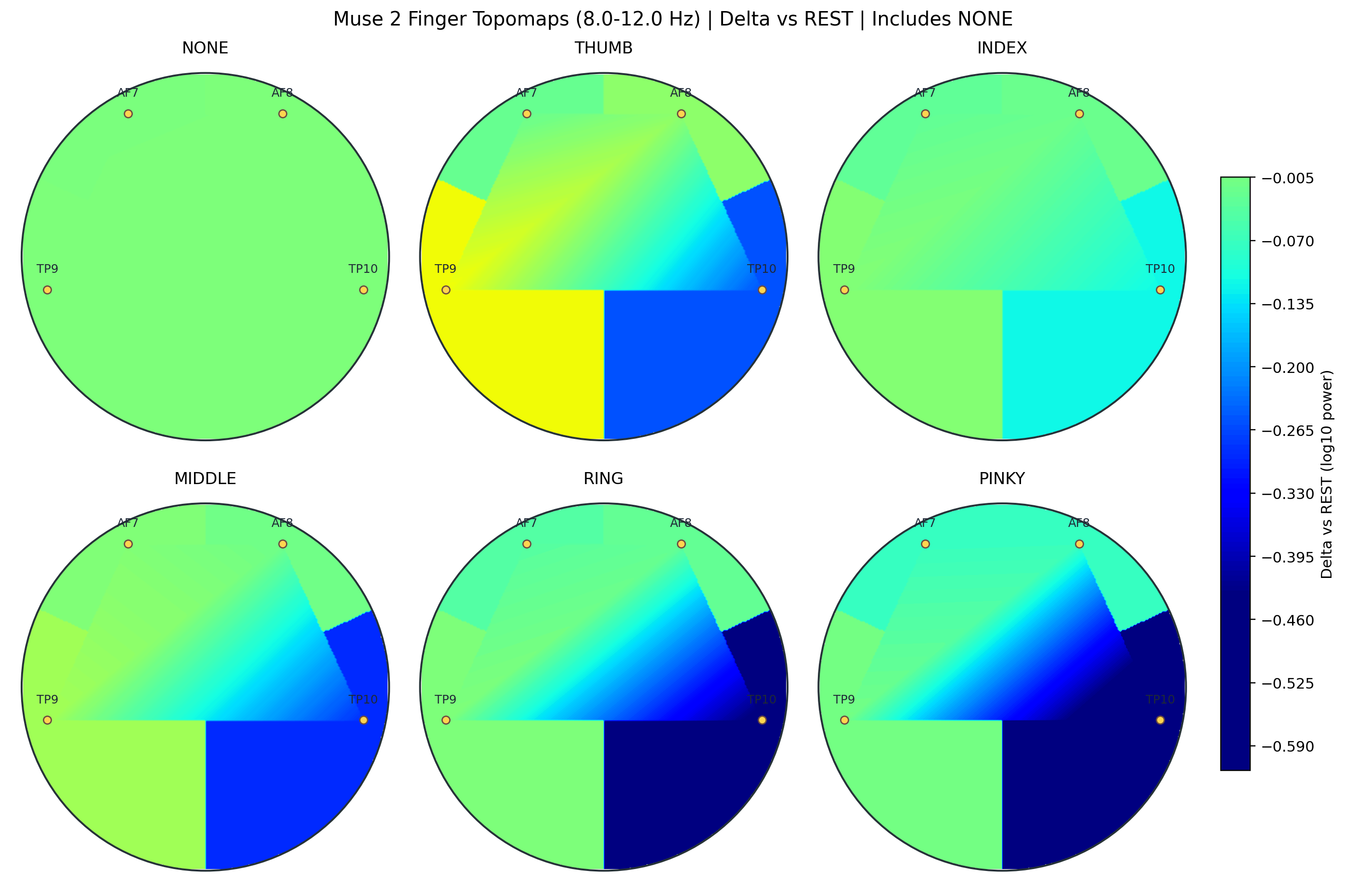

Finger alpha rest-delta topomap with NONE reference

Finger-level rest-delta maps, including NONE as the explicit REST reference. The strongest variation remains concentrated on TP10 and TP9, which helps explain why lateral Muse 2 channels carry most of the finger-separation load.

Interpretive notes

- The strongest rest-relative separations remain concentrated on the lateral Muse 2 channels rather than a broad scalp-wide shift.

- OPEN and CLOSE are highly similar in rest-relative alpha topography, so these figures are best read as signal-evidence context rather than a substitute for temporal decoding metrics.

- Finger-level variation remains strongest on TP10 and then TP9, with AF7 and AF8 changing much less.

Figures

These figures carry the structure behind the headline metrics. The confusion matrices show where the decoder drifts, while the confidence panels show whether the model's probabilities are stable enough to support conservative actuation rules.

Note: in the finger confusion matrix, REST action misses are shown as NONE. Those cells reflect true movement windows that the action head labeled REST, not deployable OPEN/CLOSE plus NONE outputs.

Action confusion matrix

Confusion matrix for action classification across REST, OPEN, and CLOSE. Rows show the actual labels, columns show the predicted labels, and off-diagonal cells show where action boundaries remain unstable.
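
For readers who want to rebuild such a matrix from saved predictions, a minimal sketch follows the same convention (rows are actual labels, columns are predicted labels). The function name and the toy labels are illustrative only.

```python
ACTIONS = ("REST", "OPEN", "CLOSE")


def confusion_matrix(true_labels, pred_labels, labels=ACTIONS):
    """Count matrix with rows indexed by actual label and columns by prediction."""
    index = {name: i for i, name in enumerate(labels)}
    matrix = [[0] * len(labels) for _ in labels]
    for t, p in zip(true_labels, pred_labels):
        matrix[index[t]][index[p]] += 1
    return matrix


# Toy labels for five windows (not real data).
true_actions = ["REST", "OPEN", "CLOSE", "OPEN", "REST"]
pred_actions = ["REST", "OPEN", "OPEN", "OPEN", "CLOSE"]

for name, row in zip(ACTIONS, confusion_matrix(true_actions, pred_actions)):
    print(f"{name:>5}: {row}")
```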

Finger confusion matrix on non-REST windows

Confusion matrix for finger classification on non-REST windows. The diagonal shows which active fingers remain separable after REST is removed from the task.

Action calibration

Action calibration helps show whether confidence tracks observed correctness tightly enough to support conservative actuation gates and replay analysis.

Confidence calibration

Calibration bars compare predicted confidence with observed accuracy across bins. Better alignment means the model's confidence is more usable for thresholding and safety gates.
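
The binned comparison described here is also what a standard expected calibration error (ECE) summarizes in one number. The sketch below uses equal-width confidence bins; the choice of 10 bins and the function name are assumptions, since the page does not state how its ECE values were binned.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Weighted mean gap between average confidence and accuracy per bin."""
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        b = min(int(conf * n_bins), n_bins - 1)  # confidence 1.0 goes in the top bin
        bins[b].append((conf, ok))

    n = len(confidences)
    ece = 0.0
    for items in bins:
        if not items:
            continue
        avg_conf = sum(c for c, _ in items) / len(items)
        accuracy = sum(1 for _, ok in items if ok) / len(items)
        ece += (len(items) / n) * abs(avg_conf - accuracy)
    return ece


# Toy example: two confident correct windows and two uncertain ones.
confidences = [0.95, 0.95, 0.55, 0.55]
correct = [True, True, True, False]
ece = expected_calibration_error(confidences, correct)
print(round(ece, 4))
```

A lower value means confidence tracks observed accuracy more closely, which is what makes fixed probability thresholds meaningful as safety gates.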

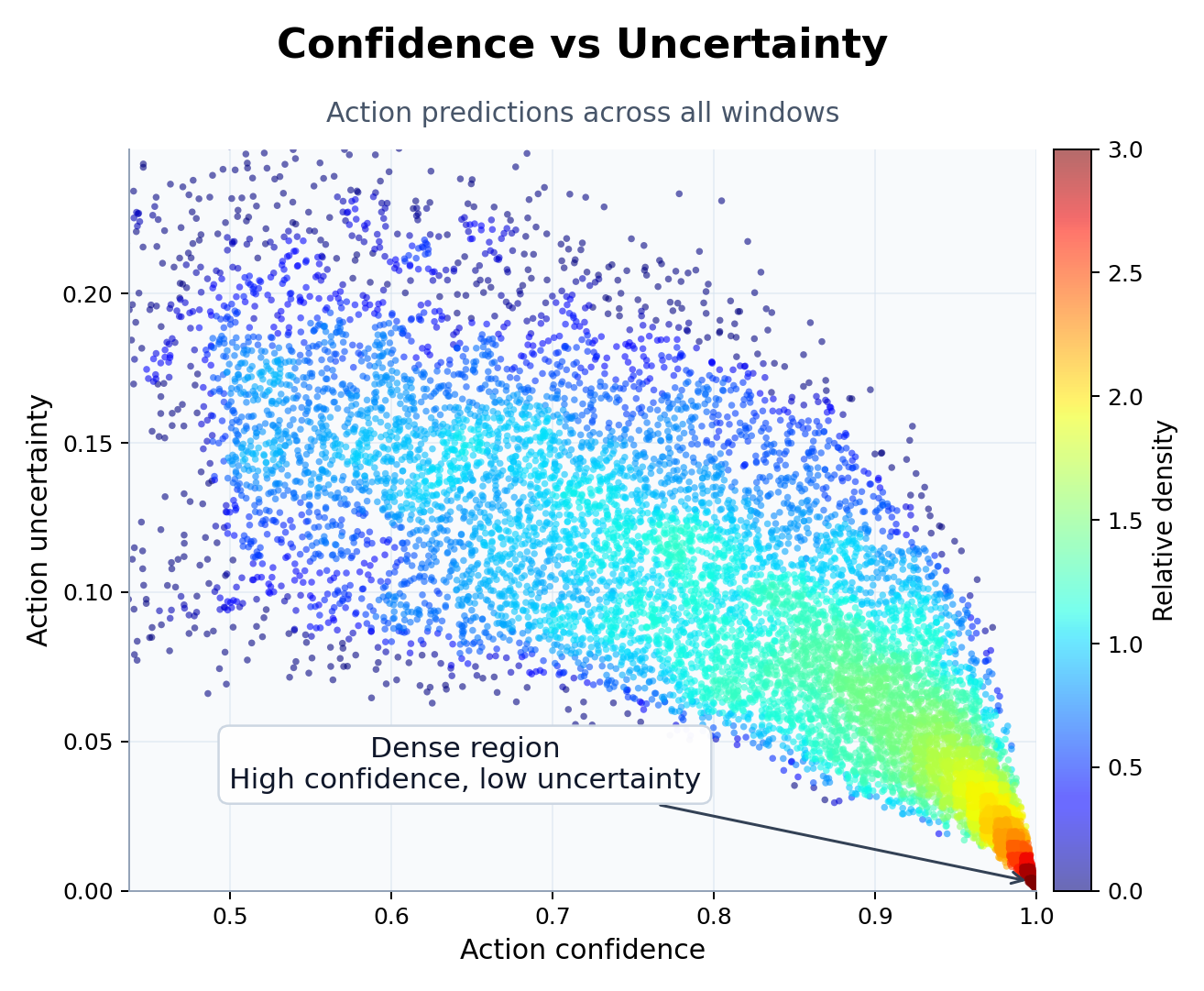

Confidence and uncertainty scatter

The uncertainty scatter shows where action predictions stay compact and where they begin to loosen. High-confidence, low-uncertainty regions are the most stable part of the decoding space.

Source trail

Follow the selection path

These links document how the project moved from broad tuning and ablation work to the current public run.

Winning-model update

March 19, 2026 refresh that publishes the current featured deployment candidate.

Deployment breakthrough update

March 18, 2026 post that expands the selection story to replay and pseudo-live behavior.

Split-fix and live-defaults update

March 16, 2026 post documenting the 2,595-config postprocess ablation.

Older tuning update

February 26, 2026 tuning post documenting 100+ model variants, a 30+ hour sweep, and the 90-run / 33.3-hour logged block.

HTML report

Full report bundle for the current public run.

Metrics JSON

Sanitized metrics bundle for the current public run.