Saved test split

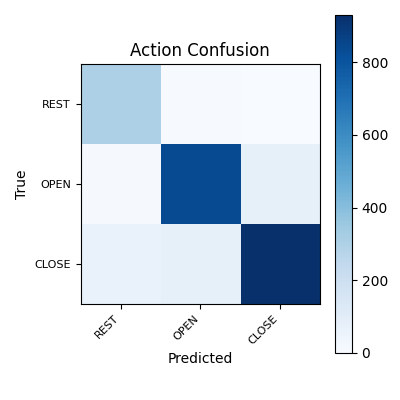

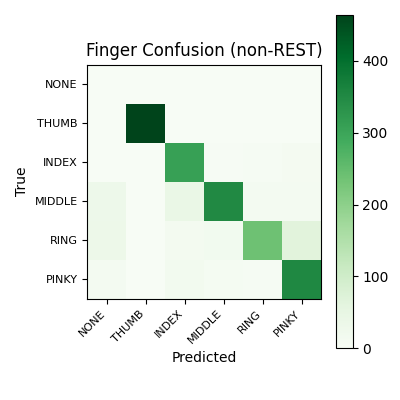

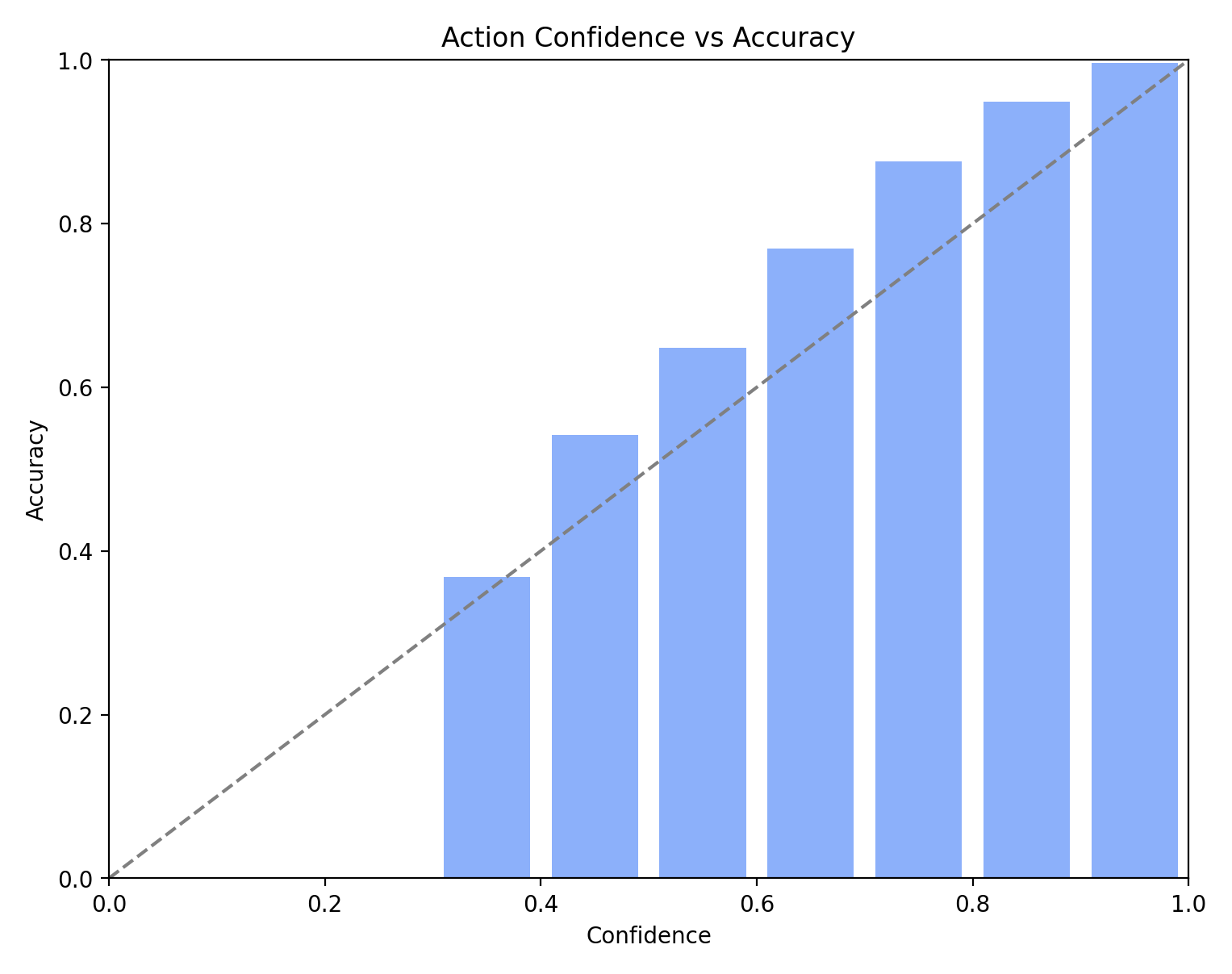

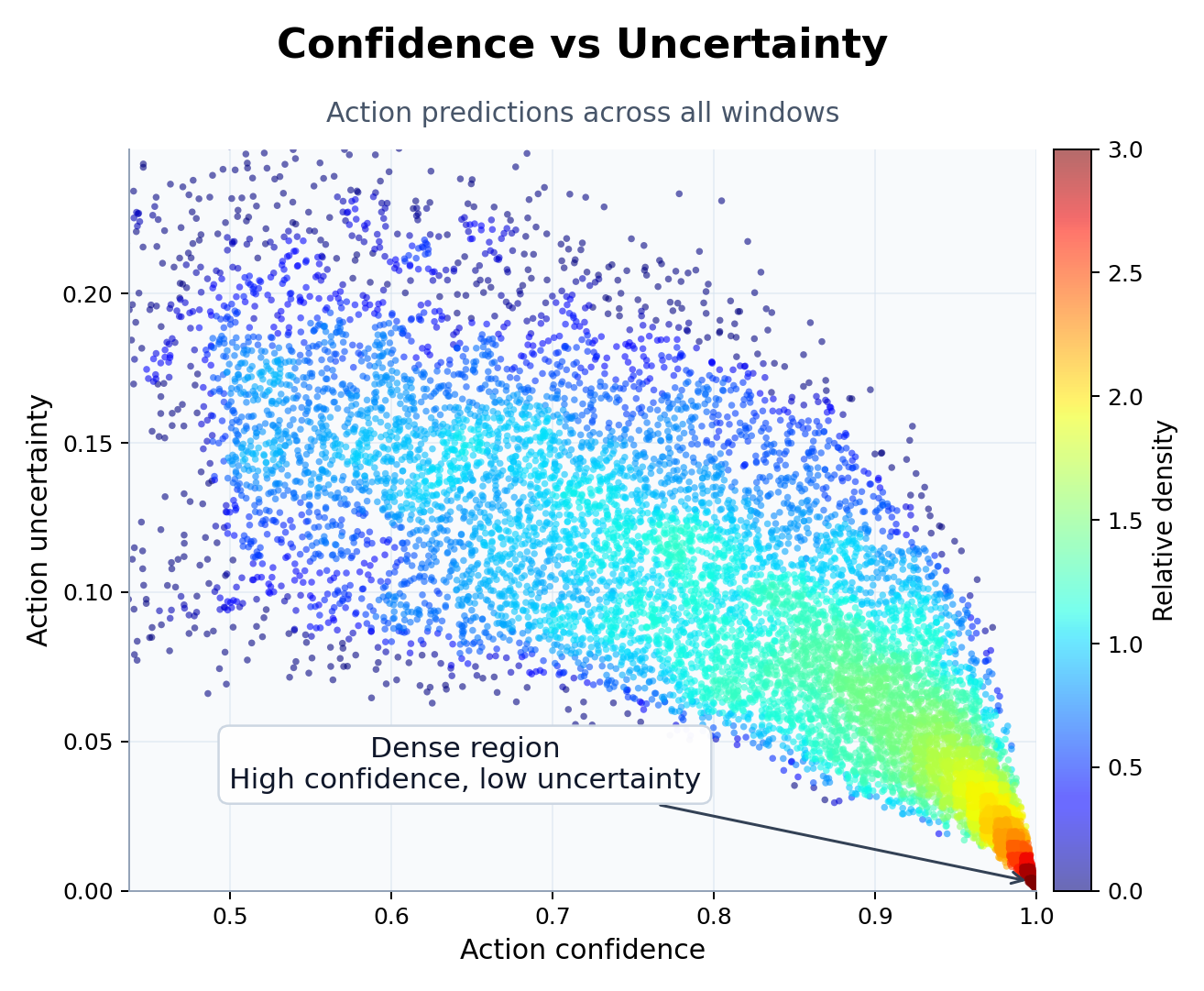

89.79% (action) / 87.01% (finger)

Action and finger accuracy

Action accuracy is reported on all test windows; finger accuracy is reported on non-REST windows.

2,301 test windows; 1,994 non-REST
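The two accuracies above use different denominators, which a short sketch can make concrete. The following is a minimal illustration, not the project's evaluation code: the record layout, the `REST` label string, and the action and finger names are all hypothetical, and it assumes a window counts as non-REST when its true action is not REST.

```python
REST = "REST"  # hypothetical label string for rest windows


def action_and_finger_accuracy(windows):
    """Return (action_acc, finger_acc, n_all, n_non_rest).

    Action accuracy is computed over every window. Finger accuracy is
    computed only over windows whose TRUE action is not REST (assumed
    definition of "non-REST").
    """
    non_rest = [w for w in windows if w["true_action"] != REST]

    action_hits = sum(w["true_action"] == w["pred_action"] for w in windows)
    finger_hits = sum(w["true_finger"] == w["pred_finger"] for w in non_rest)

    action_acc = action_hits / len(windows) if windows else float("nan")
    finger_acc = finger_hits / len(non_rest) if non_rest else float("nan")
    return action_acc, finger_acc, len(windows), len(non_rest)


# Toy data only; these are not the reported test windows.
toy = [
    {"true_action": "FLEX", "pred_action": "FLEX",
     "true_finger": "index", "pred_finger": "index"},
    {"true_action": "FLEX", "pred_action": "EXTEND",
     "true_finger": "thumb", "pred_finger": "thumb"},
    {"true_action": REST, "pred_action": REST,
     "true_finger": None, "pred_finger": None},
    {"true_action": "EXTEND", "pred_action": "EXTEND",
     "true_finger": "middle", "pred_finger": "index"},
]

action_acc, finger_acc, n_all, n_non_rest = action_and_finger_accuracy(toy)
print(f"action {action_acc:.2%} on {n_all} windows; "
      f"finger {finger_acc:.2%} on {n_non_rest} non-REST windows")
```

Because the finger denominator excludes REST windows, the two percentages are not directly comparable as counts of correct windows.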

Primary holdout

84.66%

Joint accuracy with REST and applicability diagnostics

Adds REST and applicability diagnostics beyond the saved test split.

Deployment pair invariant passed; 2.26% applicability false negatives (FN) on true non-REST windows

Quiet-REST replay

97.26%

Auxiliary quiet-REST check

Separate replay used to characterize REST behavior.

4.53% applicability false positives (FP) on true REST windows
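The applicability figures in these cards are conditioned on the true class, which can be sketched as follows. This assumes a definition the page does not spell out: a false negative (FN) is a true non-REST window that the applicability gate marks as not applicable, and a false positive (FP) is a true REST window that the gate marks as applicable. The function and argument names are hypothetical.

```python
def applicability_rates(true_is_rest, pred_applicable):
    """Return (fn_rate_on_non_rest, fp_rate_on_rest).

    true_is_rest:    booleans, True where the window is truly REST.
    pred_applicable: booleans, True where the gate says "applicable".
    Each rate is normalized by its own true-class count (assumed
    definition), not by the total number of windows.
    """
    pairs = list(zip(true_is_rest, pred_applicable))
    on_non_rest = [applicable for is_rest, applicable in pairs if not is_rest]
    on_rest = [applicable for is_rest, applicable in pairs if is_rest]

    # FN: gate rejected a window that was truly non-REST.
    fn_rate = (sum(not a for a in on_non_rest) / len(on_non_rest)
               if on_non_rest else float("nan"))
    # FP: gate accepted a window that was truly REST.
    fp_rate = (sum(a for a in on_rest) / len(on_rest)
               if on_rest else float("nan"))
    return fn_rate, fp_rate


# Toy data only: 4 true non-REST windows followed by 5 true REST windows.
true_is_rest = [False, False, False, False, True, True, True, True, True]
pred_applicable = [True, True, True, False, False, False, False, False, True]

fn_rate, fp_rate = applicability_rates(true_is_rest, pred_applicable)
print(f"FN on true non-REST: {fn_rate:.2%}; FP on true REST: {fp_rate:.2%}")
```

Normalizing per true class keeps the two rates independent of how much REST a given replay contains, which matters when comparing sessions with different REST proportions.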

Chronological replay

84.30%

Core full-session replay

Replay across the two core movement sessions on a longer chronological trace.

95.99% REST true-positive rate (TPR); 18.07% applicability FP on true REST windows

Pseudo-live replay

86.64%

Saved Step 7 decision path

Replay through the saved decision path, including would-send precision and false REST actuation.

0.12% false REST actuation; 93.32% would-send precision
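One plausible reading of the two decision-path figures can be sketched as below. The definitions here are assumptions, not taken from the saved Step 7 code: would-send precision is taken as the share of would-be-sent commands that match the true label, and false REST actuation as the share of true REST windows on which any command would be sent. The record layout and label names are hypothetical.

```python
REST = "REST"  # hypothetical label string for rest windows


def decision_path_metrics(records):
    """Return (would_send_precision, false_rest_actuation).

    records: list of (true_label, sent_label) pairs, where sent_label is
    None when the decision path would send nothing for that window.
    """
    sent = [(t, s) for t, s in records if s is not None]
    rest = [(t, s) for t, s in records if t == REST]

    # Precision over windows where a command would actually be sent.
    precision = (sum(t == s for t, s in sent) / len(sent)
                 if sent else float("nan"))
    # Share of true REST windows that would still trigger a command.
    false_rest = (sum(s is not None for _, s in rest) / len(rest)
                  if rest else float("nan"))
    return precision, false_rest


# Toy data only; these are not the replayed sessions.
records = [
    ("index_flex", "index_flex"),  # sent, correct
    ("index_flex", None),          # withheld
    ("thumb_flex", "index_flex"),  # sent, wrong
    ("thumb_flex", "thumb_flex"),  # sent, correct
    (REST, None),
    (REST, None),
    (REST, "thumb_flex"),          # actuation during true REST
    (REST, None),
]

precision, false_rest = decision_path_metrics(records)
print(f"would-send precision {precision:.2%}; "
      f"false REST actuation {false_rest:.2%}")
```

Under these assumed definitions, withheld windows lower neither metric, so the pair should be read alongside a recall-style figure such as applicability FN.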

Harder replay session

71.96%

March 17 realism session

A harder pseudo-live check that exposes where applicability recall is still weak.

52.98% applicability false negatives (FN) on true non-REST windows